Today data has replaced money as the global currency for trade.

“McKinsey estimates that about 75 percent of the value added by data flows on the Internet accrues to “traditional” industries, especially via increases in global growth, productivity, and employment. Furthermore, the United Nations Conference on Trade and Development (UNCTAD) estimates that about 50 percent of all traded services are enabled by the technology sector, including by cross-border data flows.”

As the global economy has become fully dependent on the transformative nature of electronic data exchange, its participants have also become more protective of data’s inherent value. The rise of this data protectionism is now so acute that it threatens to restrict the flow of data across national borders. Data-residency requirements, widely used to buffer domestic technology providers from international competition, also tend to introduce delays, costs and limitations into commerce in nearly every business sector. This impact is widespread because it is also driving:

- Laws and policies that further limit the international exchange of data;

- Regulatory guidelines and restrictions that limit the use and scope of data collection; and

- Data security controls that route and allow access to data based on user role, location and access device.

A direct consequence of these changes is that the entire business enterprise spectrum is now faced with the challenge of how to classify and label this vital commerce component.

|

|

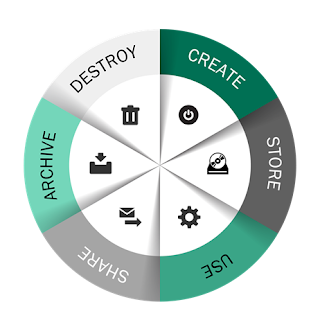

Figure 1– The data lifecycle

|

The challenges posed here are immense. Not only is an extremely large amount of data being created every day, but businesses still need to manage and leverage their huge store of old data. This stored wealth is not static, because every bit of data has a lifecycle through which it must be monitored, modified, shared, stored and eventually destroyed. The growing adoption of cloud computing technologies adds even more complexity to this mosaic. Another widely unappreciated reality being highlighted in boardrooms everywhere is how these changes are affecting business risk and internal information technology governance. These issues are broadly lumped into cybersecurity, a domain where the sparsity of legal precedent is coupled almost daily with a need for headline-driven, rapid-fire business decisions.

To deal with this new reality, enterprises must standardize and optimize the complexity associated with managing data. Success in this task mandates a renewed focus on data classification, data labeling and data loss prevention. Although these data security precautions have historically been glossed over as too expensive or too hard, the penalties and long-term pain associated with a data breach incident have raised the stakes considerably. According to the Global Commission on Internet Governance, the average financial cost of a single data breach could exceed $12,000,000 [1], which includes:

- Organizational costs: $6,233,941

- Detection and Escalation Costs: $372,272

- Response Costs: $1,511,804

- Lost Business Costs: $3,827,732

- Victim Notification Cost: $523,965
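As a quick sanity check on that headline number, the five components listed above can simply be added up. The snippet below is a minimal sketch; the dictionary and variable names are my own and are used only for illustration.

```python
# Cost components of a single data breach, as listed above (US dollars).
breach_costs = {
    "Organizational costs": 6_233_941,
    "Detection and escalation costs": 372_272,
    "Response costs": 1_511_804,
    "Lost business costs": 3_827_732,
    "Victim notification cost": 523_965,
}

total = sum(breach_costs.values())
print(f"Total: ${total:,}")  # prints "Total: $12,469,714"

# Show each component as a share of the total.
for name, cost in breach_costs.items():
    print(f"{name}: {cost / total:.1%}")
```

The components sum to $12,469,714, which is consistent with the "could exceed $12,000,000" figure.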

So is adequate data classification still a bridge too far?

While the competencies required to implement an effective data management program are significant, acquiring them is not impossible. The relevant skill sets are, in fact, foundational to the deployment of modern business automation which, in turn, represents the only economical path toward streamlining repeatable processes and reducing manual tasks. Minimum steps include:

- Improving enterprise awareness around the importance of data classification

- Abandoning outdated or unrealistic classification schemes in order to adopt less complex ones

- Clarifying organizational roles and responsibilities while simultaneously removing those that have been tailored to individuals

- Focusing on identifying and classifying data, not data sets

- Adopting and implementing a dynamic classification model [2]
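To make the last two steps a little more concrete, here is a minimal sketch of what classifying individual records (rather than whole data sets) and re-classifying them as they change might look like. Everything in it is an assumption made for illustration: the four labels, the two regular-expression rules, and the `Record` class are hypothetical and do not come from any particular product or standard.

```python
import re
from dataclasses import dataclass

# Hypothetical labels, ordered from least to most sensitive.
LABELS = ["public", "internal", "confidential", "restricted"]

# Hypothetical content rules: (pattern, minimum label when the pattern matches).
RULES = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "restricted"),          # SSN-like number
    (re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.]+\b"), "confidential"),  # email address
]


def classify(content: str) -> str:
    """Return the most sensitive label triggered by the content."""
    label = "public"
    for pattern, rule_label in RULES:
        if pattern.search(content) and LABELS.index(rule_label) > LABELS.index(label):
            label = rule_label
    return label


@dataclass
class Record:
    content: str
    label: str = "public"

    def __post_init__(self):
        # Label each record individually when it is created.
        self.label = classify(self.content)

    def update(self, new_content: str) -> None:
        # "Dynamic" here means the label is recomputed whenever the data changes.
        self.content = new_content
        self.label = classify(new_content)


record = Record("Quarterly newsletter draft")
print(record.label)  # public
record.update("Send the draft to jane@example.com")
print(record.label)  # confidential
record.update("Applicant SSN: 123-45-6789, contact jane@example.com")
print(record.label)  # restricted
```

A real program would draw its labels and rules from enterprise policy rather than two regular expressions, but the shape is the same: the label travels with the individual record and is re-evaluated at each stage of its lifecycle.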

The modern enterprise must either build these competencies in-house or work with a trusted third party to move through these steps. Since the importance of data will only increase, the task of implementing a modern data classification and modeling program is destined to become even more business critical.

(This post was brought to you by IBM Global Technology Services. For more content like this, visit Point B and Beyond.)

[2] Recommended steps adapted from “Rethinking Data Discovery And Data Classification” by Heidi Shey and John Kindervag, October 1, 2014, available from IBM at https://www-01.ibm.com/common/ssi/cgi-bin/ssialias?htmlfid=WVL12363USEN

(Thank you. If you enjoyed this article, get free updates by email or RSS. © Copyright Kevin L. Jackson 2015)
